How to Build an AI Agent Network for Your Business

AI agent networks are transforming businesses by automating complex workflows through specialized agents. These networks consist of multiple AI agents, each assigned specific roles like data retrieval, decision-making, and task execution. Unlike single-agent setups, multi-agent systems improve efficiency, reduce costs, and handle high volumes of work without fatigue.

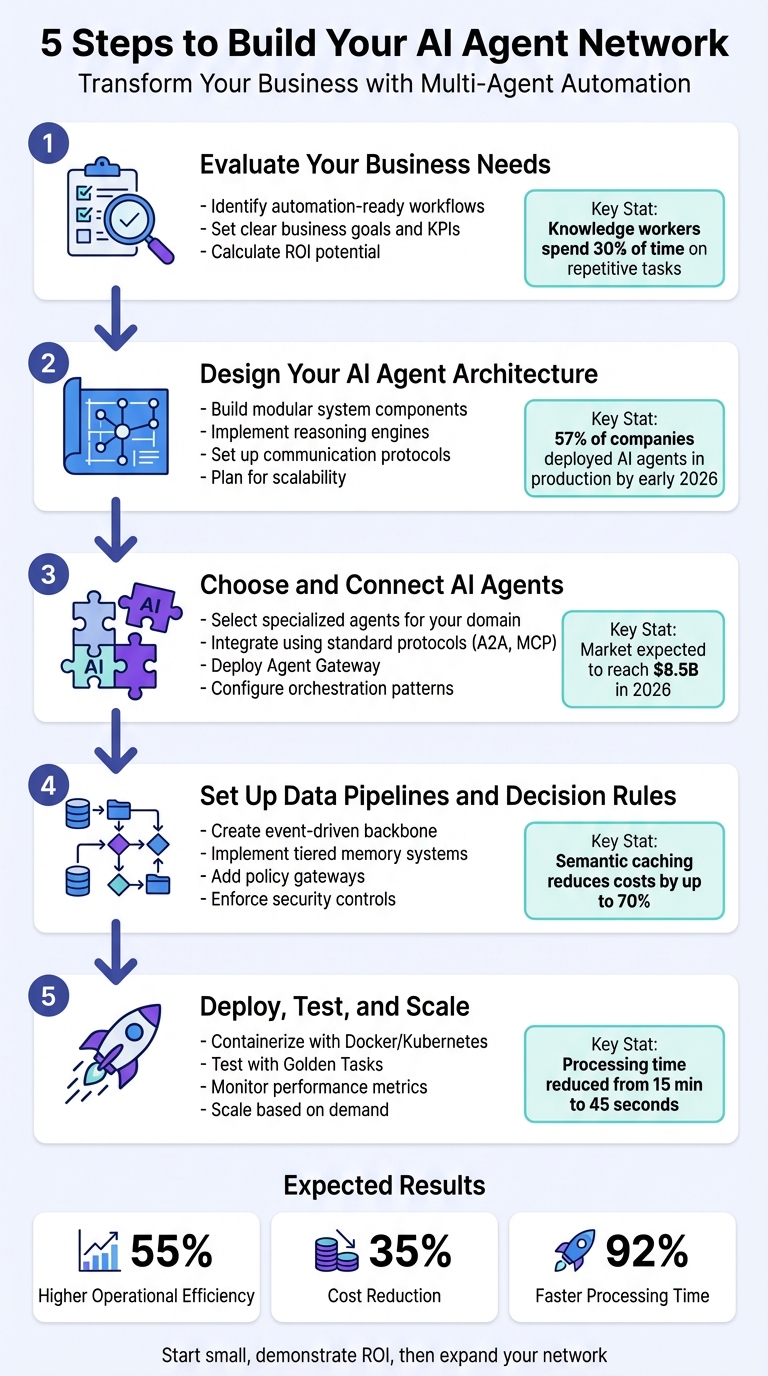

Here’s the process to build your own AI agent network:

- Identify Tasks for Automation: Focus on repetitive, document-heavy, or context-driven workflows like lead qualification, invoice processing, or customer support.

- Set Goals and KPIs: Define metrics like cost savings, time reductions, and accuracy improvements to measure success.

- Design the Architecture: Build a modular system with key components like reasoning engines, memory systems, and communication protocols for smooth agent collaboration.

- Integrate Specialized Agents: Use tools like AgentBandwidth’s agents (e.g., JobVantage for recruitment or ClaimPath for insurance) to match your business needs.

- Optimize Data Pipelines: Set up structured data flows and decision rules to ensure reliability and efficiency.

- Deploy and Scale: Use containerization, Kubernetes, and autoscaling to handle growth while controlling costs.

5-Step Process to Build an AI Agent Network for Business Automation

Step 1: Evaluate Your Business Needs

Find Workflows That Can Be Automated

The first step in integrating AI agents into your business is identifying which workflows make sense to automate. Not every task is suitable. The best candidates tend to be document-heavy, require context-based decision-making, involve unstructured data (like emails or PDFs), or are prone to frequent exceptions where traditional automation tools fall short [8][6]. If your team spends a lot of time interpreting guidelines, making judgment calls, or searching across multiple systems for information, you’ve likely found a process worth automating [8].

Consider this: knowledge workers spend about 30% of their time - roughly a day and a half of a five-day week - on repetitive tasks and searching for information. This is where the Process Audit Framework becomes invaluable. Use it to evaluate tasks based on:

- Volume: How often does the task occur each month?

- Complexity: How much judgment does it require?

- Variability: How different are the inputs?

- Impact: What are the costs of errors or delays?

- Current Pain: How frustrated is your team with the process?

Tasks with high ROI potential often include lead qualification, customer support triage, invoice and contract processing, and internal knowledge retrieval [9].
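
The five audit criteria above can be turned into a rough ranking. The sketch below is one possible way to do that, not a prescribed formula: the 1-5 rating scale, the weights, and the example workflows and their ratings are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights (they sum to 1.0); tune them to your own priorities.
WEIGHTS = {"volume": 0.25, "complexity": 0.15, "variability": 0.15,
           "impact": 0.25, "pain": 0.20}

@dataclass
class WorkflowAudit:
    name: str
    volume: int        # each criterion is rated 1 (low) to 5 (high)
    complexity: int
    variability: int
    impact: int
    pain: int

    def score(self) -> float:
        """Weighted average on the same 1-5 scale as the ratings."""
        return sum(getattr(self, key) * weight for key, weight in WEIGHTS.items())

def rank(audits):
    """Return audits sorted from strongest to weakest automation candidate."""
    return sorted(audits, key=lambda audit: audit.score(), reverse=True)

# Illustrative ratings only, not measured data.
candidates = [
    WorkflowAudit("quarterly board deck", 1, 5, 2, 3, 2),
    WorkflowAudit("invoice processing", 5, 3, 4, 4, 5),
]

for audit in rank(candidates):
    print(f"{audit.name}: {audit.score():.2f}")
```

A score like this is only a conversation starter. It helps compare candidates side by side before you commit to a pilot.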

Here’s an example: In January 2026, a group of Dutch manufacturers and wholesalers implemented AI-powered order processing agents to handle formats like Excel, PDFs, and emails. This change reduced processing time by 92%, cut errors by 90%, and saved mid-size operations around $3 million annually [8]. Similarly, a retail analytics company replaced a team of 15 employees manually extracting data from product photos with AI agents, saving over $330,000 per year [8]. These examples show the impact of identifying and automating the right workflows.

Set Business Goals and KPIs

Once you’ve identified which workflows to automate, the next step is setting clear business goals and KPIs. Start by estimating ROI over a fixed period, such as a month: multiply the hours saved in that period by the employee’s hourly rate, then subtract the API and platform costs for the same period [9]. Many organizations report positive ROI within three to six months when focusing on specific AI use cases [8].
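
To make the ROI arithmetic concrete, here is a minimal sketch. The function name, the weeks-per-month conversion, and the sample figures (20 hours a week, $40 an hour, $1,200 a month in tooling) are assumptions, not numbers from the sources cited here.

```python
WEEKS_PER_MONTH = 52 / 12  # converts weekly savings to a monthly figure

def monthly_roi(hours_saved_per_week: float, hourly_rate: float,
                monthly_tool_cost: float) -> tuple[float, float]:
    """Return (net monthly savings, net savings divided by tooling cost)."""
    gross_savings = hours_saved_per_week * WEEKS_PER_MONTH * hourly_rate
    net_savings = gross_savings - monthly_tool_cost
    # With no tooling cost the ratio is undefined, so report infinity.
    ratio = net_savings / monthly_tool_cost if monthly_tool_cost else float("inf")
    return round(net_savings, 2), round(ratio, 2)

# Hypothetical inputs for illustration.
net, ratio = monthly_roi(hours_saved_per_week=20, hourly_rate=40.0,
                         monthly_tool_cost=1200.0)
print(f"Net monthly savings: ${net:,.2f} (ROI ratio {ratio})")
```

Keeping savings and costs on the same time basis is the important part. Mixing weekly savings with monthly or annual costs skews the result.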

Your KPIs should cover different areas:

- Operational metrics: Monitor processing volume, average response time, accuracy rates, and how often tasks escalate to human intervention [8].

- Business impact: Measure total hours saved, cost reductions, and improvements in employee satisfaction [8].

- Quality metrics: Track field extraction accuracy and the "hallucination rate" - cases where AI makes unsupported claims.

For example, an optimized e-commerce chatbot improved customer satisfaction scores from 3.8 to 4.5 while cutting handle time by 30% [10]. These metrics are crucial, especially as task-specific AI agents are expected to be embedded in 40% of enterprise applications by the end of 2026, compared to under 5% in 2024 [9].

"Most businesses are still running on manual processes that eat up 30% of their employees' time on repetitive, mind-numbing tasks." – Jean Bonnenfant, Co-founder, Lleverage [8]

Defining workflows and KPIs sets the stage for designing AI agent architectures that align with your business objectives.

Step 2: Design Your AI Agent Architecture

Once your workflows and KPIs are set, the next step is to design an architecture that aligns with these business objectives.

Core Components of an AI Agent Network

A well-structured AI agent network relies on four key elements: the Agent (with defined roles and tools), the Orchestrator, the Communication Layer, and the State Store [14]. Think of it as a team where every member has a specific role and works in harmony.

At the heart of each agent is its Reasoning Engine, powered by a Large Language Model (LLM) that interprets instructions and decides on actions [12]. The Orchestrator ensures smooth execution, handling tasks and resolving errors as they arise [11][12]. To manage data access, a Tool Registry provides a standardized way for agents to interact with APIs and databases, often using schema-first approaches like JSON Schema or Pydantic models to maintain proper data flow [11][5]. For memory, agents leverage a mix of short-term memory for immediate tasks, episodic memory stored in vector databases for past interactions, and semantic memory to house proprietary knowledge [15][5].

Two protocols are essential for a seamless setup. The Model Context Protocol (MCP) acts as a universal interface, enabling agents to connect with tools and data sources [13]. Meanwhile, the Agent-to-Agent Protocol (A2A) allows agents to communicate horizontally, using structured "Agent Cards" for discovery and coordination. These standards eliminate the need for complex custom integrations, making it easier to adapt to new tools and technologies.

"Reliability is a System Property, not a Model Property." - Sudeep, AI Solutions Architect [1]

With these components in place, the focus shifts to ensuring scalability and smooth integration with your existing systems.

Plan for Scalability and Integration

Your architecture should not only meet technical requirements but also align with your business goals and KPIs. A modular design is key - it prevents context overload and simplifies testing and maintenance [1]. For instance, in February 2026, AI NeuroSignal, a financial intelligence platform, deployed a 20-agent system to analyze signals across 100+ markets. By using an Elo rating system to weigh agents’ contributions based on historical accuracy, they cut false signals by 73% compared to their previous single-agent setup. The orchestrator managed 20 simultaneous API calls in under two seconds [16].

To support scalability, design agents to be stateless, relying on external storage systems like DynamoDB or PostgreSQL for persistent state management [13][14]. Use circuit breakers to isolate failing agents, preventing cascading failures and reducing unnecessary API costs [16][5]. Employ tiered activation to call only the agents needed for a task, rather than activating the entire network [16][3].
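
A circuit breaker of the kind described above can be sketched in a few lines. This is a simplified illustration, not a production implementation: the class name, the thresholds, and the `call_agent` helper are assumptions, and a real deployment would keep breaker state in shared storage so that stateless agents can see it.

```python
import time

class CircuitBreaker:
    """Stops calling an agent after repeated failures, then retries later."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures   # assumed threshold
        self.reset_after = reset_after     # seconds before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at = None              # None means the circuit is closed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # Half-open: once the cool-down has passed, let one trial call through.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()

def call_agent(breaker, agent_fn, *args):
    """Run agent_fn unless the breaker is open; never raises to the caller."""
    if not breaker.allow():
        return {"ok": False, "error": "circuit_open"}
    try:
        result = agent_fn(*args)
    except Exception as exc:
        breaker.record_failure()
        return {"ok": False, "error": str(exc)}
    breaker.record_success()
    return {"ok": True, "data": result}
```

While the circuit is open, the failing agent is skipped entirely, so no API calls (or tokens) are spent on it until the cool-down expires.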

For integration, adopt a schema-first approach when designing tools. Clearly define names, descriptions, and typed input/output schemas for every tool your agents use [15][5]. This, combined with layered safety checks - such as input validation (e.g., PII scrubbing), role-based access control during tool calls, and output consistency filters - helps maintain data accuracy and prevents errors. By early 2026, 57% of companies had deployed AI agents in production, with the market expected to reach $8.5 billion that year [13]. Starting with modularity and standardized protocols ensures your network can grow alongside your business needs.
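
As an illustration of the schema-first idea, the sketch below defines a tool with a name, a description, and typed input and output schemas, then validates both sides of every call. It uses only the standard library for brevity; in practice you might use JSON Schema or Pydantic instead. The `lookup_invoice` tool, its fields, and its stub handler are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class ToolSpec:
    name: str
    description: str
    input_schema: dict     # field name -> required Python type
    output_schema: dict
    handler: Callable[..., dict]

def check(payload: dict, schema: dict, where: str) -> None:
    """Reject payloads with missing, unexpected, or wrongly typed fields."""
    missing = sorted(schema.keys() - payload.keys())
    extra = sorted(payload.keys() - schema.keys())
    if missing or extra:
        raise ValueError(f"{where}: missing={missing}, unexpected={extra}")
    for key, expected in schema.items():
        if not isinstance(payload[key], expected):
            raise TypeError(f"{where}: {key} must be {expected.__name__}")

def call_tool(spec: ToolSpec, payload: dict) -> dict:
    check(payload, spec.input_schema, f"{spec.name} input")
    result = spec.handler(**payload)
    check(result, spec.output_schema, f"{spec.name} output")
    return result

# Hypothetical tool; a real handler would query your billing system.
lookup_invoice = ToolSpec(
    name="lookup_invoice",
    description="Fetch the status and amount of an invoice by its ID.",
    input_schema={"invoice_id": str},
    output_schema={"status": str, "amount_usd": float},
    handler=lambda invoice_id: {"status": "paid", "amount_usd": 1250.0},
)

print(call_tool(lookup_invoice, {"invoice_id": "INV-001"}))
```

Validating outputs as well as inputs means a misbehaving tool is caught at the boundary rather than several agents downstream.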

Step 3: Choose and Connect AgentBandwidth AI Agents

Once your modular architecture is in place, the next step is selecting AI agents that fit seamlessly into your business workflows. AgentBandwidth offers a suite of specialized agents designed for autonomous decision-making. These agents can either operate independently or work together as a network, adapting to your specific needs.

AgentBandwidth's AI Agents Overview

AgentBandwidth provides eight specialized agents, each tailored to specific domains. Here's a quick look at what they offer:

- JobVantage: Simplifies job searches by automating application tracking, detecting ghost listings, and creating tailored resumes.

- EdgeFlow: Analyzes markets to find arbitrage opportunities, assess risks, and generate actionable insights.

- ClaimPath: Manages health insurance appeals by parsing Explanation of Benefits (EOBs), calculating deadlines, and drafting compliant appeals.

- EquityIQ: Models startup equity scenarios, tracks vesting schedules, parses term sheets, and provides alerts for incentive stock options (ISO).

- GameForge: Focuses on creative workflows, handling game design assets and content drafting.

- MealForge: Optimizes meal planning by balancing nutrition and budget constraints.

- SubScan: Audits subscriptions, monitors price changes, and automates cancellations to save costs.

- Court Support: Tracks legal deadlines and generates compliance documentation to prevent procedural errors.

Each agent operates using four key roles: Retriever, Extractor, Validator, and Action Agent. These roles ensure smooth data gathering, parsing, compliance checks, and system updates [2].

Connect Agents to Your Workflow

To integrate these agents into your operations, AgentBandwidth employs a network architecture that uses standard protocols for capability discovery via Agent Cards. This enables secure and consistent connections through A2A (Agent-to-Agent) and MCP (Model Context Protocol) [17][18].

Deploy an Agent Gateway to handle tasks like semantic routing, authentication, and multiplexing. This prevents communication bottlenecks and ensures efficient data flow [18]. For better monitoring, integrate OpenTelemetry to trace events across agents, diagnose issues, and manage operational costs [17][18]. Use structured JSON payloads to maintain clarity and validation in inter-agent communication [1][2].

When orchestrating workflows, choose a pattern that suits your complexity:

- Sequential: Ideal for fixed pipelines where tasks follow a specific order.

- Concurrent: Allows parallel checks or validations for faster processing.

- Router: Dynamically assigns tasks based on context, such as directing insurance-related inquiries to ClaimPath or legal matters to Court Support.

For example, a customer service workflow could combine a Router pattern to triage inquiries with EdgeFlow running concurrent market scans. To manage resources effectively, implement circuit breakers to limit token usage and iterations [1].
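
The Router pattern can be sketched as a function that maps an inquiry to an agent name. The version below uses simple keyword matching as a stand-in for semantic routing, which in practice would rely on embeddings or an LLM classifier. The keyword lists and the `human_review` fallback are assumptions for illustration.

```python
import re

# Hypothetical keyword lists; a production router would use semantic matching.
ROUTES = {
    "ClaimPath": ("claim", "denial", "eob", "insurance", "appeal"),
    "Court Support": ("court", "filing", "hearing", "legal"),
}
FALLBACK = "human_review"  # assumed default when nothing matches

def route(inquiry: str) -> str:
    """Return the agent whose keywords overlap most with the inquiry."""
    words = set(re.findall(r"[a-z]+", inquiry.lower()))
    scores = {agent: len(words & set(keywords))
              for agent, keywords in ROUTES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else FALLBACK

for text in ("My insurance claim was denied",
             "When is my court hearing?",
             "Can I change my password?"):
    print(f"{text!r} -> {route(text)}")
```

Sending unmatched inquiries to a fallback rather than to the closest agent keeps the router from guessing when it has no signal.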

Agent Features and Benefits Comparison

To help you decide which agents to deploy, here's a comparison of their focus areas, features, and benefits:

| Agent | Domain Focus | Key Features | Primary Benefits |
|---|---|---|---|
| JobVantage | Employment | Job search, ghost listing detection, resume creation, application tracking | Saves time, filters irrelevant opportunities |
| EdgeFlow | Finance | Market scanning, arbitrage detection, risk evaluation, signal generation | Identifies profit opportunities, reduces risk |
| ClaimPath | Healthcare | Denial parsing, appeal drafting, EOB auditing, deadline calculation | Recovers claims, ensures compliance |
| EquityIQ | Startups | Term sheet parsing, vesting schedule tracking, exit modeling, ISO alerts | Simplifies equity management |
| GameForge | Creative | Asset generation, design briefs, content drafting | Speeds up creative production |
| MealForge | Lifestyle | Meal planning, grocery optimization, nutrition checks, budget tracking | Balances nutrition and costs |
| SubScan | Operations | Subscription auditing, price monitoring, cancellations | Reduces recurring expenses |
| Court Support | Legal | Legal document review, deadline tracking, compliance generation | Avoids missed deadlines |

Ensuring Secure and Efficient Operations

To maintain security, enforce least privilege access. For example, Retriever agents should have read-only permissions, while Action agents get limited write access to specific endpoints [2]. For high-risk actions like payments over $10,000 or contract changes, set up Human-in-the-Loop (HITL) approvals [2][4]. This balances the speed of automation with necessary safety measures.

Step 4: Set Up Data Pipelines and Decision Rules

For your AI agents to function efficiently, you need reliable data flows and well-defined decision-making frameworks. Without these, errors, inefficiencies, and unnecessary delays can creep in, leading to higher costs. This step is all about creating the infrastructure that ensures smooth and precise operations.

Create Data Pipelines for AI Agents

Start by building an event-driven backbone using tools like Kafka, NATS, or Redis Streams. These tools handle typed events (e.g., TaskRequested, TaskCompleted), allowing agents to communicate in structured formats. To avoid misunderstandings, define "Structured Intents" with schemas (e.g., JSON Schema or Protobuf). For example:

{"intent": "CODE_REVIEW", "priority": "HIGH"}

This clarity helps agents interpret tasks without ambiguity.
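
A structured intent is most useful when it is validated on arrival. The sketch below parses the JSON message shown above into a typed `TaskRequested` event and rejects anything outside the schema. Only `CODE_REVIEW` and `HIGH` come from the example; the other enum members are hypothetical.

```python
import json
from dataclasses import dataclass
from enum import Enum

class Intent(Enum):
    CODE_REVIEW = "CODE_REVIEW"
    INVOICE_EXTRACTION = "INVOICE_EXTRACTION"   # hypothetical extra intent

class Priority(Enum):
    LOW = "LOW"          # hypothetical
    MEDIUM = "MEDIUM"    # hypothetical
    HIGH = "HIGH"

@dataclass(frozen=True)
class TaskRequested:
    intent: Intent
    priority: Priority

def parse_task(raw: str) -> TaskRequested:
    """Parse a JSON message; raise ValueError if it breaks the schema."""
    data = json.loads(raw)
    if set(data) != {"intent", "priority"}:
        raise ValueError(f"unexpected or missing fields: {sorted(data)}")
    # Enum lookups raise ValueError for values outside the allowed set.
    return TaskRequested(Intent(data["intent"]), Priority(data["priority"]))

event = parse_task('{"intent": "CODE_REVIEW", "priority": "HIGH"}')
print(event)
```

Rejecting unknown intents at the edge means downstream agents only ever see messages they know how to handle.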

Organize data storage into tiered memory systems for efficiency. Use Redis for short-term memory, which is perfect for active session states and tasks requiring ultra-fast access. For long-term memory, rely on vector databases like Pinecone or Qdrant to store historical data, user preferences, and institutional knowledge. Relational databases such as Postgres or MySQL are ideal for maintaining audit trails. This multi-layered setup reduces the time spent retrieving data, which can account for 40% to 50% of workflow execution time [19][20].

To cut costs, implement semantic caching (e.g., Redis LangCache) to reuse LLM outputs, potentially reducing inference expenses by up to 70%. Set aggressive Time-To-Live (TTL) limits - typically 15 to 60 minutes - for session data in Redis to keep pipelines efficient. Instead of storing raw chat logs, save only curated facts, decisions, and summaries in vector databases to minimize overhead.
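
The caching and TTL ideas can be illustrated with an in-memory stand-in. This sketch is deliberately simplified: it matches prompts exactly after normalizing case and whitespace, whereas true semantic caching compares embeddings, and it uses a Python dict where production systems would use Redis. The class and function names are assumptions.

```python
import time

class TTLCache:
    """In-memory stand-in for a Redis cache with per-entry expiry."""

    def __init__(self, ttl_seconds=900, clock=time.monotonic):
        self.ttl = ttl_seconds      # 900 s = 15 minutes, the low end of the range
        self.clock = clock
        self._store = {}

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivial variations still hit.
        return " ".join(prompt.lower().split())

    def get(self, prompt: str):
        key = self._key(prompt)
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[key]    # expired: evict and report a miss
            return None
        return value

    def put(self, prompt: str, value) -> None:
        self._store[self._key(prompt)] = (value, self.clock() + self.ttl)

def cached_completion(cache, prompt, llm_call):
    """Return (answer, was_cache_hit), calling the model only on a miss."""
    hit = cache.get(prompt)
    if hit is not None:
        return hit, True
    answer = llm_call(prompt)
    cache.put(prompt, answer)
    return answer, False
```

Every cache hit is a model call you did not pay for, which is where the savings come from. The TTL keeps stale answers from lingering.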

Policy gateways are essential for validating tool calls before execution. These gateways check for issues like data masking, rate limits, and schema compliance, ensuring malformed queries are blocked. Wrap tool responses in structured envelopes (e.g., { ok, data, error, meta }) that include details like execution time, tool name, and cache status.
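
One way to produce the `{ ok, data, error, meta }` envelope is a small wrapper around every tool call. The sketch below is illustrative: the `run_tool` name and the exact `meta` keys are assumptions chosen to mirror the fields mentioned above.

```python
import time

def run_tool(name, fn, *args, cache_hit=False, **kwargs):
    """Call a tool and always return a structured envelope, never raise."""
    started = time.perf_counter()
    try:
        data, error, ok = fn(*args, **kwargs), None, True
    except Exception as exc:
        data, error, ok = None, f"{type(exc).__name__}: {exc}", False
    elapsed_ms = round((time.perf_counter() - started) * 1000, 2)
    return {
        "ok": ok,
        "data": data,
        "error": error,
        "meta": {"tool": name, "elapsed_ms": elapsed_ms, "cache_hit": cache_hit},
    }

def divide(a, b):
    return a / b

print(run_tool("divide", divide, 6, 3))
print(run_tool("divide", divide, 6, 0))
```

Because failures come back in the same shape as successes, the orchestrator can branch on `ok` instead of catching exceptions from every tool.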

Once your data pipelines are optimized, the next step is to establish clear decision-making rules for safe and effective operations.

Add Decision-Making Rules

With robust data pipelines in place, it's time to implement strict decision-making protocols. Store business rules in machine-readable formats like YAML or JSON. This "Policy-as-Code" approach ensures rules are enforced deterministically, outside the reasoning capabilities of the LLM. For example, require human approval for high-stakes actions like payments exceeding $10,000 or contract modifications.
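
Here is a minimal sketch of Policy-as-Code, assuming a simple JSON rule format invented for this example. The two rules mirror the cases named above; the field names, decision labels, and the permissive default are assumptions, and a stricter deployment might default to deny.

```python
import json

# Hypothetical rule format; in practice this would live in a versioned file.
POLICY = json.loads("""
{
  "rules": [
    {"action": "payment", "field": "amount_usd", "above": 10000,
     "decision": "require_approval"},
    {"action": "contract_change", "decision": "require_approval"}
  ],
  "default": "allow"
}
""")

def decide(action: str, params: dict) -> str:
    """Evaluate rules in order; plain code, no LLM involved."""
    for rule in POLICY["rules"]:
        if rule["action"] != action:
            continue
        # Threshold rules only fire when the field strictly exceeds the limit.
        if "field" in rule and params.get(rule["field"], 0) <= rule["above"]:
            continue
        return rule["decision"]
    return POLICY["default"]

print(decide("payment", {"amount_usd": 12000}))
print(decide("payment", {"amount_usd": 500}))
print(decide("contract_change", {}))
```

Because the check runs outside the model, a persuasive prompt cannot talk the agent past it.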

Limit agent access to only the tools and data they need. For example, a Retriever agent should have read-only permissions, while Action agents might have scoped write access to specific endpoints. This "least privilege" approach minimizes potential damage if an agent malfunctions.

Set up circuit breakers to prevent runaway costs. For example, impose iteration limits on agent loops to avoid infinite cycles. Studies show that orchestrated multi-agent systems deliver 100% actionable recommendations, compared to just 1.7% for uncoordinated single-agent setups. However, up to 40% of agentic AI projects may be canceled by 2027 due to underestimated operational complexities [19].
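
An iteration limit can be enforced by the loop that drives the agent rather than by the agent itself. The sketch below assumes a hypothetical `step` function that returns the new state and a done flag; the default cap of 8 is an illustrative choice.

```python
class StepLimitReached(RuntimeError):
    """Raised when an agent loop hits its cap without finishing."""

def run_with_limit(step, state, max_steps=8):
    """Call step(state) -> (new_state, done) until done or the cap is hit."""
    for used in range(1, max_steps + 1):
        state, done = step(state)
        if done:
            return state, used
    raise StepLimitReached(f"no result after {max_steps} steps; escalate to a human")

# Toy step function: counts up and finishes at 3.
def count_to_three(n):
    return n + 1, n + 1 >= 3

print(run_with_limit(count_to_three, 0))
```

Raising an explicit error when the cap is hit gives the orchestrator a clear signal to escalate instead of silently retrying.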

For irreversible actions like database deletions, financial transactions, or contract changes, enforce Human-in-the-Loop (HITL) controls. Monitor tool-call sequences closely to avoid deadlocks and ensure the system operates smoothly.

Step 5: Deploy, Test, and Scale Your Network

With your data pipelines and decision rules set, it's time to put your AI agent network into production. This stage focuses on creating a reliable deployment setup, ensuring everything works as intended, and preparing your system to handle growth without spiraling costs or disruptions.

Prepare Your Deployment Environment

To ensure smooth deployment, containerize your agents using Docker. This packages models and dependencies into isolated, lightweight units. Pair this with Kubernetes for features like self-healing, service discovery, and automated rollouts. Use "Deployments" for agents that need to run continuously (e.g., monitoring or routing tasks) and "Jobs" for scheduled or batch operations. Include liveness probes to restart stuck agents and readiness probes to ensure agents only handle traffic once fully operational.

"Docker makes agents portable; Kubernetes makes them reliable." – Valentina Vianna, Community Manager, Bix-Tech

For visibility into system performance, use Prometheus and Grafana to track metrics. Store agent configurations, prompt templates, and tool definitions in Git, enabling a GitOps workflow. This approach simplifies rollbacks and ensures an audit trail for changes.

Security should be a priority from day one. Use Kubernetes NetworkPolicies to restrict which external APIs agents can access, and implement secrets management to secure API keys. To control costs, set token budgets for each agent and workflow, as multi-agent setups can be 10–40 times more expensive than single-agent solutions [22].
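
Token budgets per agent and per workflow can be enforced with a small accounting layer. This is a sketch under assumed names and limits (20,000 tokens for the agent and 50,000 for the workflow are placeholders); actual token counts would come from your model provider's usage data.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would push any budget past its limit."""

class TokenBudget:
    def __init__(self, name: str, limit: int):
        self.name, self.limit, self.used = name, limit, 0

    def remaining(self) -> int:
        return self.limit - self.used

def charge(tokens: int, *budgets: TokenBudget) -> None:
    """Charge every budget, or none of them if any would be exceeded."""
    for budget in budgets:
        if tokens > budget.remaining():
            raise BudgetExceeded(
                f"{budget.name}: {tokens} requested, {budget.remaining()} left")
    for budget in budgets:
        budget.used += tokens

# Hypothetical limits for illustration.
agent_budget = TokenBudget("extractor-agent", 20_000)
workflow_budget = TokenBudget("invoice-workflow", 50_000)

charge(15_000, agent_budget, workflow_budget)
print(agent_budget.remaining(), workflow_budget.remaining())
```

Checking all budgets before charging any of them keeps the counters consistent when a call is rejected.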

These foundational steps set the stage for rigorous testing and a stable, production-ready network.

Test Performance and Reliability

Before launching, conduct thorough testing with representative "Golden Tasks." Use an LLM to grade outputs against a strict rubric. To avoid infinite reasoning loops that could drain your token budget, implement circuit breakers and set iteration limits (typically 5–10 steps) [23].
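
A Golden Task harness can be quite small. In the sketch below, `rubric_grade` is a deterministic stand-in for the LLM judge: it simply checks that required facts appear in the output. The task content, the 0.8 pass mark, and the stub agent are all assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass
class GoldenTask:
    prompt: str
    must_include: list   # facts a correct answer has to mention

def rubric_grade(output: str, task: GoldenTask) -> float:
    """Stand-in for an LLM judge: fraction of required facts present."""
    if not task.must_include:
        return 1.0
    text = output.lower()
    hits = sum(1 for fact in task.must_include if fact.lower() in text)
    return hits / len(task.must_include)

def evaluate(agent, tasks, pass_mark=0.8):
    """Run every task through the agent and summarize the pass rate."""
    results = [(task.prompt, rubric_grade(agent(task.prompt), task))
               for task in tasks]
    passed = sum(1 for _, score in results if score >= pass_mark)
    return {"pass_rate": passed / len(tasks), "results": results}

# Hypothetical tasks and a stub agent that always gives the same answer.
tasks = [
    GoldenTask("Summarize invoice INV-001", ["INV-001", "paid"]),
    GoldenTask("Summarize invoice INV-002", ["INV-002", "overdue"]),
]
stub_agent = lambda prompt: "Invoice INV-001 was paid in full."

print(evaluate(stub_agent, tasks))
```

Running the same task set before and after every prompt or model change turns "it seems fine" into a number you can track.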

When updating your system, choose a deployment strategy based on your risk tolerance:

- Canary deployments: Roll out changes to a small user group first, allowing you to catch errors before a full rollout.

- Shadow deployments: Route live traffic to a new agent version without returning its responses to users. This captures outputs for offline comparison but can double your LLM API costs during validation [21].

Here's a quick comparison of deployment strategies:

| Deployment Strategy | Risk Level | Cost | Best For |
|---|---|---|---|
| Rolling Update | Medium | Low | Minor bug fixes and infrastructure updates |
| Blue-Green | Low | High | Major version changes or API-breaking updates |
| Canary | Very Low | Medium | Prompt updates and model swaps |
| Shadow | None | Very High | High-stakes or safety-critical logic |

One legal tech startup implemented a four-stage pipeline (Extraction, Analysis, Summary, Validation) for contract processing. This system reduced processing time from 15 minutes (manual) to just 45 seconds per contract, costing $0.80 per document. Impressively, the validation stage caught up to 94% of hallucinations from generation agents [22].

Scale Your Network as You Grow

Once performance is verified, it's time to scale. Traditional CPU-based autoscaling often falls short for AI agents, as they are typically I/O-bound, spending most of their time waiting for API responses. Even under heavy loads, CPU usage may hover around just 5% [21]. Instead, use Kubernetes Event-Driven Autoscaling (KEDA) to scale based on queue depth. This allows agent pods to scale down to zero during idle periods and ramp up quickly when needed.
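
The idea behind queue-based scaling is simple proportional arithmetic. The sketch below approximates it and is not KEDA's actual implementation, whose behavior also depends on the scaler configuration, polling interval, and cool-down settings. The function name, the target of 10 messages per replica, and the replica bounds are assumptions.

```python
import math

def desired_replicas(queue_depth: int, target_per_replica: int,
                     min_replicas: int = 0, max_replicas: int = 20) -> int:
    """Replicas needed so each handles about target_per_replica messages."""
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    wanted = math.ceil(queue_depth / target_per_replica)
    # Clamp to the configured bounds; a minimum of 0 allows scale-to-zero.
    return max(min_replicas, min(max_replicas, wanted))

for depth in (0, 45, 1000):
    print(depth, "->", desired_replicas(depth, target_per_replica=10))
```

Scaling on backlog rather than CPU means capacity follows the work that is actually waiting, which suits agents that spend most of their time blocked on API calls.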

"Scaling AI agents requires addressing infrastructure, orchestration, and design challenges." – AgentCenter Team

For large-scale operations, organize agents into specialized pools, such as Research, Engineering, and Operations, allowing each to scale independently based on demand. For example, an e-commerce company handling over 50,000 monthly support tickets deployed a hierarchical system with a Supervisor agent and four specialists. This setup achieved 91% routing accuracy and improved customer satisfaction by 23% after three months of tuning [22].

To monitor performance, track agent-specific metrics like reasoning step counts, tool-call success rates, and token usage per task. In Kubernetes, set a generous terminationGracePeriodSeconds (60–120 seconds) to allow agents enough time to finish complex tasks before shutting down pods.

The multi-agent AI market is expected to grow from $7.84 billion in 2025 to $52.62 billion by 2030 [24]. However, nearly 40% of these projects may face cancellation by 2027 due to underestimated complexity and costs [19]. Building a successful network requires viewing reliability as a system-wide property. This means focusing on careful engineering, clear architecture, limited autonomy, strong safeguards, and ongoing monitoring [5].

Conclusion: Start Building Your AI Agent Network

Creating an AI agent network addresses practical business challenges by implementing targeted, coordinated automation. This guide has detailed five essential steps to establish a dependable and scalable system.

The shift from single-agent methods to dynamic, multi-agent networks is transforming how businesses operate. Specialized agents - whether for billing, technical support, or sales - consistently outperform generalist systems. Plus, parallel processing can cut task times from four minutes to just one [3]. Companies using AI agents report an average of 55% higher operational efficiency and a 35% reduction in costs [26]. The market for AI agents is also expected to grow significantly, reaching $47.1 billion by 2030 [25].

"Throwing agents into workflows without orchestration is like putting instruments on stage without a conductor; you won't get music, just noise." – Fastn AI [25]

These results highlight why adopting a modular AI network is no longer optional - it’s a strategic advantage. Start small with AgentBandwidth’s ready-to-use solutions. Whether it’s JobVantage for recruitment, ClaimPath for health insurance appeals, or SubScan for subscription auditing, each agent is designed to excel in a specific domain. As your business evolves, you can easily integrate additional agents without overhauling the entire system. That’s the beauty of a modular setup.

The question isn’t whether AI agents will make an impact - it’s how quickly you can deploy them responsibly [7]. Start with one high-demand workflow, demonstrate the ROI, and then expand. Your network grows alongside your business, achieving in hours what used to take days. Take the first step today to boost efficiency and drive meaningful growth for your organization.

FAQs

What’s the best first workflow to automate with AI agents?

When you're considering your first workflow to automate with AI agents, focus on high-value, repetitive tasks that consume a lot of employee time. Think along the lines of manual data entry, routine customer support, or document processing. These types of tasks are ideal because they typically have clear objectives, measurable outcomes, and can deliver a noticeable impact.

Starting with these straightforward processes allows for quick efficiency improvements, an easier implementation process, and a solid demonstration of ROI. Plus, it sets the stage for tackling more advanced automation projects down the road.

How do I keep agents from hallucinating or taking risky actions?

To make AI agents more accurate and dependable, it’s crucial to build safeguards into their design. One effective approach is Retrieval-Augmented Generation (RAG), which helps AI access accurate, up-to-date information, reducing errors in its outputs.

Beyond that, set clear boundaries for the AI. This means outlining forbidden actions, implementing safety controls, and establishing limits on costs and acceptable failure rates. These measures act as guardrails, ensuring the AI operates within a safe and predictable framework.

Testing and evaluation also play a huge role. Use multi-layered evaluations and scenario-based testing to simulate potential problems and identify weaknesses. Combine this with real-time monitoring to catch and address issues as they arise, even after deployment.

Finally, adjust the level of autonomy based on the potential risks involved. By aligning the AI’s decision-making freedom with the complexity and stakes of its tasks, you can create agents that are both safer and more reliable.

What will it cost to run a multi-agent network at scale?

Running a large-scale multi-agent network can come with hefty costs, influenced by factors such as infrastructure, system architecture, and resource management. Key expenses typically include cloud computing, data storage, and orchestration tools. For enterprise-level deployments, where agent numbers scale into the thousands or beyond, costs can climb into the millions of dollars annually.